Computational Friction: When Systems Wait, the Grid Pays

Before we build the next trillion dollars of data centers, we should ask how much compute is compensating for architectural data distance.

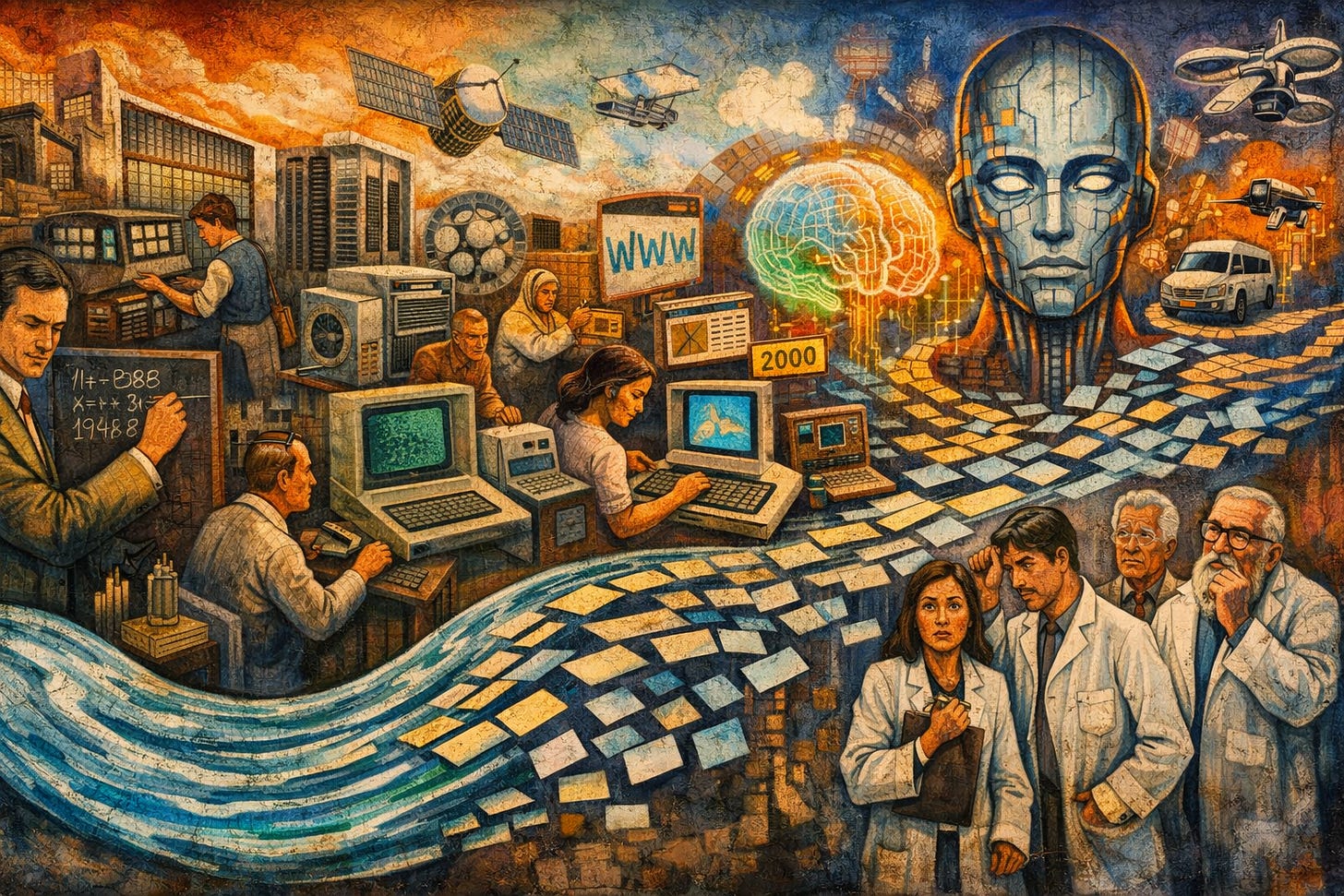

For more than twenty-five years, I tuned JVM-based application stacks “in the trenches.” Many were built in ColdFusion. Some in Java. Some in Groovy frameworks. I even had a long Scala conversation with a senior Twitter engineer.

The language was rarely the problem.

The symptom was almost always the same: CPU saturation, threads backed up, users complaining, and infrastructure teams recommending more hardware.

And the diagnosis was almost never CPU.

When systems slow under load, the first thing people see is high utilization. Dashboards glow red. CPU graphs spike. Memory pressure rises. Thread pools fill. The conclusion arrives quickly:

“We need more servers.”

Sometimes that works — briefly. Add instances. Add memory. Increase the pool. The system breathes again. Then traffic grows and the same problem returns.

This feels uncomfortably similar to our current AI infrastructure moment — except now the “more servers” reflex looks like more data centers.

What was really happening

Over and over, performance issues traced back to the data path:

poorly indexed tables

OLTP systems used for analytical queries

ORM layers issuing dozens of chatty queries per request

lock contention under concurrency

remote disk I/O

connection pool starvation

cross-network round trips

analytics workloads running against transactional engines

Application servers were not “slow.”

They were waiting.

Waiting on disk. Waiting on locks. Waiting on the network. Waiting on architecture.

The database wasn’t merely a storage system. It was the governor of throughput. And when load increased, waiting amplified.
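To make the chatty-ORM pattern concrete, here is a minimal Python sketch using the standard-library `sqlite3` module. The schema, the `CountingConnection` wrapper, and the function names are all hypothetical, invented for illustration. An in-memory SQLite database stands in for a real networked database, so the per-query round-trip cost is understated. The sketch compares one query per customer against a single aggregate join.

```python
import sqlite3


def make_db() -> sqlite3.Connection:
    """Build a small in-memory database (hypothetical schema)."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(
        """
        CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT);
        CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER, total REAL);
        """
    )
    conn.executemany(
        "INSERT INTO customers VALUES (?, ?)",
        [(i, f"customer-{i}") for i in range(1, 51)],
    )
    conn.executemany(
        "INSERT INTO orders VALUES (?, ?, ?)",
        [(i, (i % 50) + 1, 10.0 * i) for i in range(1, 201)],
    )
    conn.commit()
    return conn


class CountingConnection:
    """Thin wrapper that counts how many statements the caller issues."""

    def __init__(self, conn: sqlite3.Connection):
        self.conn = conn
        self.queries = 0

    def query(self, sql: str, params=()):
        self.queries += 1
        return self.conn.execute(sql, params).fetchall()


def totals_chatty(db: CountingConnection) -> dict:
    """One query for the customer list, then one more per customer."""
    totals = {}
    for cid, name in db.query("SELECT id, name FROM customers"):
        rows = db.query("SELECT total FROM orders WHERE customer_id = ?", (cid,))
        totals[name] = sum(total for (total,) in rows)
    return totals


def totals_joined(db: CountingConnection) -> dict:
    """The same answer from a single aggregate join."""
    rows = db.query(
        """
        SELECT c.name, COALESCE(SUM(o.total), 0)
        FROM customers c
        LEFT JOIN orders o ON o.customer_id = c.id
        GROUP BY c.id, c.name
        """
    )
    return dict(rows)


if __name__ == "__main__":
    chatty_db = CountingConnection(make_db())
    joined_db = CountingConnection(make_db())
    same = totals_chatty(chatty_db) == totals_joined(joined_db)
    print("chatty queries:", chatty_db.queries)
    print("joined queries:", joined_db.queries)
    print("same result:", same)
```

With 50 customers, the chatty version issues 51 statements and the joined version issues 1, for the same result. Over a real network, each extra statement is a round trip the application thread spends waiting.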

Computational friction

Computational friction is the excess compute consumed compensating for architectural inefficiency.

It shows up as:

repeated queries instead of cached results

serialization/deserialization loops

lock retries under contention

network chatter between tiers

schema translation overhead

rebuilding state repeatedly

In physical systems, friction produces heat.

In computational systems, friction produces wasted cycles — which become wasted energy — which becomes cooling, racks, power infrastructure, and capital expenditure.
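The first item on that list, repeated queries instead of cached results, can be sketched in a few lines of Python. This is an illustration under stated assumptions, not a recommended cache design: `load_profile` is a hypothetical stand-in for a database or remote lookup, and `functools.lru_cache` is only safe when the underlying data is stable or the cache is invalidated on change.

```python
from functools import lru_cache

backend_calls = 0  # counts simulated trips to the data tier


def load_profile(user_id: int) -> str:
    """Hypothetical stand-in for a database or remote API lookup."""
    global backend_calls
    backend_calls += 1
    return f"profile-{user_id}"


@lru_cache(maxsize=1024)
def load_profile_cached(user_id: int) -> str:
    """Same lookup, but repeat requests for a user are served from memory."""
    return load_profile(user_id)


def serve(requests, loader) -> int:
    """Handle a stream of user ids and return how many backend calls it cost."""
    global backend_calls
    backend_calls = 0
    for user_id in requests:
        loader(user_id)
    return backend_calls


if __name__ == "__main__":
    traffic = [i % 10 for i in range(1000)]  # 1000 requests from 10 users
    print("uncached backend calls:", serve(traffic, load_profile))
    load_profile_cached.cache_clear()
    print("cached backend calls:", serve(traffic, load_profile_cached))
```

For 1,000 requests spread over 10 users, the uncached path makes 1,000 backend calls and the cached path makes 10. The difference is work the data tier never has to do.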

Under friction: data distance

Underneath computational friction lies something deeper: data distance.

Data distance isn’t only geography. It includes:

network hops and storage latency

cross-region replication and synchronization

semantic mismatch between systems

API layers wrapping legacy systems

organizational silos that prevent coherent modeling

The further computation is from the data it depends on — physically or structurally — the more energy is required to perform the same task.

In JVM environments, this manifested clearly:

thread dumps full of JDBC waits

GC pauses amplified by object churn

load tests collapsing under moderate concurrency

“scaling” the app tier without fixing the data tier, which solved nothing

Adding hardware masked friction. It did not eliminate it.
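A back-of-envelope bound shows why scaling the app tier alone changes so little. The sketch below applies Little's law (throughput is at most concurrency divided by time held) to two resources: application threads and database connections. The function name and every number are hypothetical. It assumes each request holds one database connection for the full database wait, and that the connection limit is shared by all app servers. It ignores queueing variance, so treat the result as a rough ceiling rather than a prediction.

```python
def max_throughput_rps(app_servers, threads_per_server, cpu_ms, db_wait_ms, db_connections):
    """Rough upper bound on requests per second for a two-tier system."""
    # Each app thread is occupied for CPU time plus database wait per request.
    app_bound = app_servers * threads_per_server * 1000 / (cpu_ms + db_wait_ms)
    # Each database connection is held for the database wait per request.
    db_bound = db_connections * 1000 / db_wait_ms
    # The tighter of the two limits governs the whole system.
    return min(app_bound, db_bound)


if __name__ == "__main__":
    baseline = max_throughput_rps(4, 50, cpu_ms=20, db_wait_ms=80, db_connections=40)
    more_servers = max_throughput_rps(8, 50, cpu_ms=20, db_wait_ms=80, db_connections=40)
    faster_queries = max_throughput_rps(4, 50, cpu_ms=20, db_wait_ms=20, db_connections=40)
    print("baseline:", baseline)              # 500.0
    print("double app servers:", more_servers)  # 500.0
    print("fix the data path:", faster_queries)  # 2000.0
```

With these illustrative numbers, doubling the app servers leaves the ceiling at 500 requests per second because the database side is the governor. Cutting database wait from 80 ms to 20 ms raises it to 2,000 on the original hardware.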

From web apps to AI agents: the physics didn’t change

Fast forward to today.

We are witnessing extraordinary growth in AI infrastructure: GPU clusters expand, data centers multiply, and power-demand projections rise dramatically. Yet we also see AI deployments stall inside organizations — not because models are weak, but because the data path is fragmented.

Modern AI agents depend on:

legacy RDBMS stores

CRM and ERP systems

analytics warehouses

vector stores

multiple APIs across departments

Instead of a web application waiting on a database, we now have AI agents waiting on enterprise data.

The model is not the only heavy component.

The data path is heavy too.

And the physics has not changed — only the scale has.

If each AI request burns extra cycles compensating for fragmented, poorly structured, or distant data, what happens at planetary scale?

More GPUs. More racks. More cooling. More substations. More transmission lines. More data centers.

Some expansion is genuine. Training large models is compute-intensive. Inference at scale requires real resources.

But how much of the curve is architectural friction?

In enterprise environments, it was often easier to procure hardware than refactor schemas. Politically simpler to scale horizontally than to redesign deeply. That dynamic may now be unfolding at hyperscale.

Even a modest increase in compute per request — multiplied across billions of daily interactions — becomes substantial energy demand.
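The arithmetic behind that claim is simple enough to write down. In the sketch below, the request volume and the extra joules per request are placeholders chosen for round numbers, not measurements of any real system. The function name is hypothetical, and the result covers only the extra compute energy, not cooling or power-delivery overhead.

```python
JOULES_PER_KWH = 3.6e6   # 1 kWh = 3.6 million joules
SECONDS_PER_DAY = 86_400


def friction_energy(requests_per_day: float, extra_joules_per_request: float) -> dict:
    """Convert per-request overhead into daily energy and average power."""
    extra_joules = requests_per_day * extra_joules_per_request
    return {
        "kwh_per_day": extra_joules / JOULES_PER_KWH,
        "avg_kw": extra_joules / SECONDS_PER_DAY / 1000,
    }


if __name__ == "__main__":
    # Placeholder inputs: one billion requests a day, 10 extra joules each.
    result = friction_energy(1e9, 10.0)
    print(f"{result['kwh_per_day']:.0f} kWh per day")    # 2778 kWh per day
    print(f"{result['avg_kw']:.1f} kW continuous draw")  # 115.7 kW continuous draw
```

Under those assumed inputs, 10 joules of avoidable work per request adds up to roughly 2,800 kWh a day, a continuous draw of about 116 kW. The value to watch is the per-request overhead, because everything downstream scales linearly with it.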

The recurring mistake: mixing OLTP and OLAP

One recurring pattern over decades of tuning was the conflation of transactional and analytical workloads.

Transactional systems optimize for atomicity, concurrency, and small consistent writes.

Analytical systems optimize for aggregation, large scans, and historical computation.

When the two are mixed, both suffer.

Today’s equivalent may be AI agents querying operational systems directly for real-time reasoning while analytical systems attempt to reconstruct context asynchronously. If semantic structure is not aligned and data locality is not respected, friction accumulates.

The result is not only latency.

It is compute inflation.

Is this the ultimate power trip?

Before we build the next trillion dollars of data centers, it may be prudent to ask:

Are we building capacity for intelligence — or compensating for architectural friction?

This is not an argument against AI.

It is an argument for architectural literacy.

Reducing computational friction does not require abandoning innovation. It requires revisiting fundamentals:

data locality and topology-aware design

clean separation of OLTP and OLAP

coherent schema design

event-driven architectures where appropriate

intelligent caching strategies

minimizing unnecessary translation layers

These principles are not glamorous. They do not produce headlines.

But they reduce friction.

And reducing friction is often cheaper — and more sustainable — than generating more energy to overcome it.

ColdFusion was simply one chapter in a longer story. ColdFusion is often abbreviated as “CF,” which I now also read as Computational Friction.

Over years of JVM tuning, one principle became clear:

Throughput is governed less by processor speed than by the structural distance between computation and data.

That principle hasn’t changed.

Only the scale has.

In a world where AI infrastructure decisions shape energy grids and capital markets, architectural inefficiency is no longer merely a performance issue.

It is an infrastructure decision.

REST

REST was a major step forward, and yet it also has palpable performance impacts.

REST (Representational State Transfer), as articulated by Roy Fielding in 2000, formalized key constraints:

Stateless interactions

Uniform interface

Resource-based addressing

Client-server separation

Cacheable responses

Layered systems

Statelessness was not an accident.

It was the point. Each request must contain all the information necessary to understand it.

That constraint enabled horizontal scalability, load balancing, intermediaries, and global distribution. It was architecturally brilliant for the web.

But every architectural decision has consequences.

In REST, the server does not remember your context.

So each request must:

re-identify the user

re-authorize

re-fetch state

reassemble context

re-validate data

Under light load, this is fine. At hyperscale, it becomes significant reconstruction overhead.

In REST systems, every sentence must reintroduce the conversation. That increases compute.

REST also increases semantic distance, because resources are abstracted into representations:

JSON payloads

XML documents

serialized objects

Between client and database now sit:

controllers

services

mappers

ORM layers

serialization libraries

Each layer consumes cycles and introduces transformation cost. In short, each layer adds friction.

The database is now multiple abstractions away from the consumer. This is architectural layering for scalability, but layering increases distance, and distance increases compute.

REST combined with microservices amplified this dramatically.

Instead of: App → DB

We now have:

Service A → Service B → Service C → DB

Service A → Service D → Service E → Cache → DB

Each hop:

serializes

deserializes

authenticates

validates

logs

transforms

At scale, the cumulative cost becomes enormous. Although microservices solved organizational scaling, they often increased computational friction.
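The serialization part of that per-hop cost can be sketched directly. The Python below is a rough illustration only: `relay` and the sample order payload are hypothetical, JSON stands in for whatever wire format the services use, and authentication, validation, logging, and network latency are not modeled at all.

```python
import json


def relay(payload: dict, hops: int):
    """Pass a payload across `hops` service boundaries.

    Each boundary serializes on the sending side and deserializes on the
    receiving side. Returns the final payload and the total bytes encoded
    plus decoded along the way.
    """
    bytes_handled = 0
    message = payload
    for _ in range(hops):
        wire = json.dumps(message).encode("utf-8")  # sender serializes
        message = json.loads(wire)                  # receiver deserializes
        bytes_handled += 2 * len(wire)
    return message, bytes_handled


if __name__ == "__main__":
    order = {"order_id": 1001, "lines": [{"sku": f"sku-{i}", "qty": i} for i in range(50)]}
    paths = [
        ("App -> DB", 1),
        ("A -> B -> C -> DB", 3),
        ("A -> D -> E -> Cache -> DB", 4),
    ]
    for label, hops in paths:
        _, cost = relay(order, hops)
        print(f"{label}: {cost} bytes serialized or deserialized")
```

The payload arrives unchanged, but the bytes handled grow linearly with the number of hops. A three-hop path does three times the encoding and decoding work of the direct path to deliver the same data.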

REST was optimized for distribution, not locality. This is a key insight.

AI agents typically operate in RESTful ecosystems. We will revisit this in our next article.

Closing

These reflections are based on thirty years working in technology with hundreds of clients and thousands of systems — on-prem and cloud — worldwide.

As I often tell clients: if I have anything useful to contribute, it is having seen the same patterns in so many different places.

Thanks for reading — there will be more.